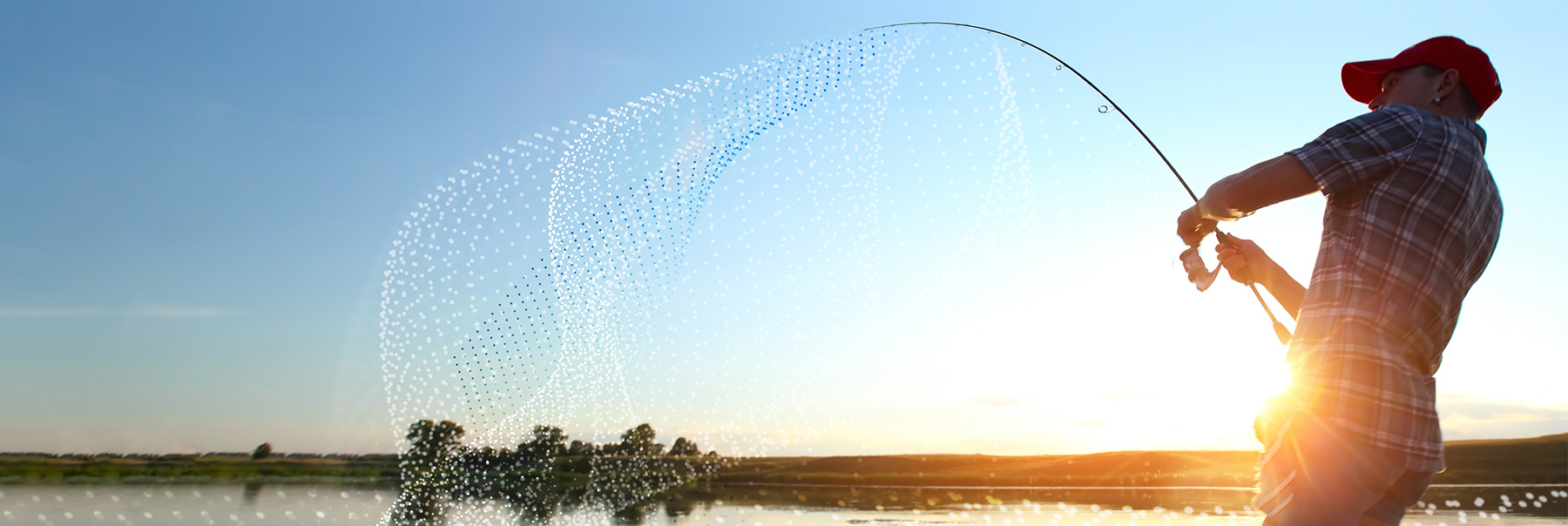

Want to catch the big fish? - Solving complex problems with software

Patrick Van Cauteren - September 14, 2020

The renowned movie producer, director, and writer David Lynch once said, “Ideas are like fish. If you want to catch little fish, you can stay in the shallow water. But if you want to catch the big fish, you've got to go deeper.” Writing software isn’t that different.

My wife is a teacher in a small rural school. Many years ago, she asked me to write an application that would allow her and her colleagues to enter exam results and print student reports. The task was not too difficult. In just a few days I had written a simple application in Microsoft Access Visual Basic. For me, the task was a small fish and I didn’t have to go very deep.

When standard libraries can’t do the trick

Writing innovative supply chain planning software is different. OMP’s customers are large Fortune 500 companies, and our applications need to handle gigabytes of data efficiently. Relying on off-the-shelf libraries alone doesn’t always produce the best solution. Standard libraries, like the C++ STL, are designed for the general case and are not necessarily the best option for a specific one. Reaching for them every time is sometimes referred to as the Golden Hammer Syndrome: if all you have is a hammer, everything looks like a nail. This doesn’t mean that generic libraries aren’t useful; they are (there are still plenty of small fish to catch). But as the business problem gets bigger or more complex, more specialized techniques are needed. To catch a big fish, you have to go deep, sometimes very deep.

Challenges we encounter every day are:

- How do you manage customer data in such a way that the memory impact is kept to a reasonable level?

- Which data structures deliver the fastest results, with the smallest memory footprint?

- How do you write an algorithm that gives the customer the best result, within a reasonable timeframe?

- How do you write an algorithm that doesn’t wind up 100 times slower when the dataset is 10 times bigger?
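
The factor of 100 in the last question is exactly what quadratic growth produces. Assuming, for illustration, an algorithm whose running time $T$ is proportional to the square of the input size $N$ (for example, one that compares every demand with every other demand), with $c$ a constant, and contrasting it with an $N \log N$ algorithm:

```latex
T(N) = c\,N^2 \;\Rightarrow\; \frac{T(10N)}{T(N)} = \frac{c\,(10N)^2}{c\,N^2} = 100
\qquad\text{versus}\qquad
T(N) = c\,N\log N \;\Rightarrow\; \frac{T(10N)}{T(N)} = \frac{10N\,(\log 10 + \log N)}{N\log N} = 10\left(1 + \frac{\log 10}{\log N}\right)
```

For a million demands ($N = 10^6$), the $N \log N$ algorithm slows down by roughly $10 \times (1 + 1/6) \approx 11.7$ times when the data grows tenfold, while the quadratic one slows down 100 times.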

Avoiding O(log N) lookups and other performance problems

Here’s a quick example to illustrate this. Suppose you have a million demands and different parts of the application need to store additional, temporary information for each of these demands. Two typical approaches are:

- Just add extra data fields to the demand data structure. Although this allows the software to quickly access the extra data, it’s not a good solution design-wise (the data could leak to parts of the software which should not be able to access it) and it increases memory consumption even when the module needing to store the extra data is not active.

- Store the extra information in a hash or a map structure. Design-wise this is a better solution, but these structures tend to have a relatively large memory footprint because they need to store additional bookkeeping data (buckets, node pointers, tree nodes). Looking up information in a hash or map also incurs extra overhead: executing the hash function and comparing potential matches, or, for tree-based maps, logarithmic lookups (what software engineers call O(log N)).
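
The two approaches can be sketched in C++ roughly as follows. The names `Demand`, `DemandWithExtras`, `ShortageModule` and the "shortage" value are hypothetical, chosen only for illustration; this is not OMP's code. The side table uses `std::unordered_map`; a `std::map` would behave similarly but with O(log N) tree lookups.

```cpp
#include <cstdint>
#include <unordered_map>

// Approach 1 (hypothetical): extra fields added directly to the demand.
// Every demand pays for the field, even when the module using it is inactive,
// and any code that can see a demand can also see the field.
struct DemandWithExtras {
    std::uint32_t id;
    double quantity;
    double tempShortage = 0.0;  // only one module actually needs this
};

// Approach 2 (hypothetical): the demand stays lean...
struct Demand {
    std::uint32_t id;
    double quantity;
};

// ...and the module keeps its temporary data private in a side table.
class ShortageModule {
public:
    void record(const Demand& d, double shortage) { shortage_[d.id] = shortage; }

    // Each lookup hashes the key and compares candidates in its bucket.
    const double* find(const Demand& d) const {
        auto it = shortage_.find(d.id);
        return it == shortage_.end() ? nullptr : &it->second;
    }

private:
    // Buckets and per-node allocations are the bookkeeping overhead.
    std::unordered_map<std::uint32_t, double> shortage_;
};

// The extra field makes every demand strictly larger.
static_assert(sizeof(DemandWithExtras) > sizeof(Demand),
              "embedding module data grows every demand");
```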

Instant access to gigabyte-sized data sets

Another way to add extra information is to assign a numeric handle to every demand. By carefully choosing the value of the handle and adding lifetime information (is the handle still valid?), the extra information can be stored in a simple vector indexed by the handle. Looking up information then becomes a simple indexing operation whose cost does not depend on the number of demands (what software engineers call O(1)). This is just one of the innovative data structures we use at OMP to deliver efficient applications that work on gigabytes of data.
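
A minimal sketch of the handle idea, assuming a generational scheme: each handle is a slot index plus a generation counter that is bumped when a demand is removed, which is one common way to answer "is the handle still valid?". The names `DemandHandle`, `DemandRegistry` and `SideTable` are hypothetical; the post does not describe OMP's actual implementation.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <optional>
#include <utility>
#include <vector>

// Hypothetical handle: slot index plus the "lifetime" of that slot it refers to.
struct DemandHandle {
    std::uint32_t index;
    std::uint32_t generation;
};

// Hands out handles and knows whether each one is still valid.
class DemandRegistry {
public:
    DemandHandle create() {
        if (!free_.empty()) {                      // reuse a released slot
            std::uint32_t idx = free_.back();
            free_.pop_back();
            return {idx, generations_[idx]};
        }
        generations_.push_back(0);                 // or grow by one slot
        return {static_cast<std::uint32_t>(generations_.size() - 1), 0};
    }
    void destroy(DemandHandle h) {
        if (!isValid(h)) return;
        ++generations_[h.index];                   // every copy of h is now stale
        free_.push_back(h.index);
    }
    bool isValid(DemandHandle h) const {
        return h.index < generations_.size() &&
               generations_[h.index] == h.generation;
    }
    std::size_t slotCount() const { return generations_.size(); }

private:
    std::vector<std::uint32_t> generations_;       // current generation per slot
    std::vector<std::uint32_t> free_;              // slots available for reuse
};

// Module-private extra data in a plain vector indexed by handle.index:
// a lookup is an O(1) array access instead of a hash or tree search.
template <typename T>
class SideTable {
public:
    explicit SideTable(const DemandRegistry& registry) : registry_(registry) {}

    bool set(DemandHandle h, T value) {
        if (!registry_.isValid(h)) return false;   // refuse stale handles
        if (h.index >= entries_.size()) entries_.resize(registry_.slotCount());
        assert(h.index < entries_.size());         // valid index < slotCount
        entries_[h.index] = Entry{h.generation, std::move(value)};
        return true;
    }
    // nullptr for stale handles or slots this module never filled.
    const T* get(DemandHandle h) const {
        if (!registry_.isValid(h) || h.index >= entries_.size()) return nullptr;
        const Entry& e = entries_[h.index];
        if (!e.value || e.generation != h.generation) return nullptr;
        return &*e.value;
    }

private:
    struct Entry {
        std::uint32_t generation = 0;
        std::optional<T> value;
    };
    const DemandRegistry& registry_;
    std::vector<Entry> entries_;
};
```

Each module can own its own `SideTable`, so its data stays private and memory is only allocated once the module actually stores something. When a slot is reused, the old entry is ignored because its generation no longer matches the new handle.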

You can catch little fish with the tools you already know by heart. Simple utilities, or simple scripts behind a web page, may not require in-depth knowledge. But if you want to solve the bigger problems, deeper investigation and innovative ideas are needed. This means reading scientific IT-related articles, designing new data structures, adapting existing data structures for gigabyte-sized data sets, and implementing new and innovative algorithms.

Patrick Van Cauteren

Senior Software Architect at OMP BE

Biography

With more than 30 years’ experience in software development, finding innovative solutions for complex problems is part of Patrick’s DNA. As a Senior Software Architect, he focuses on making sure the OMP solution fits all environments.